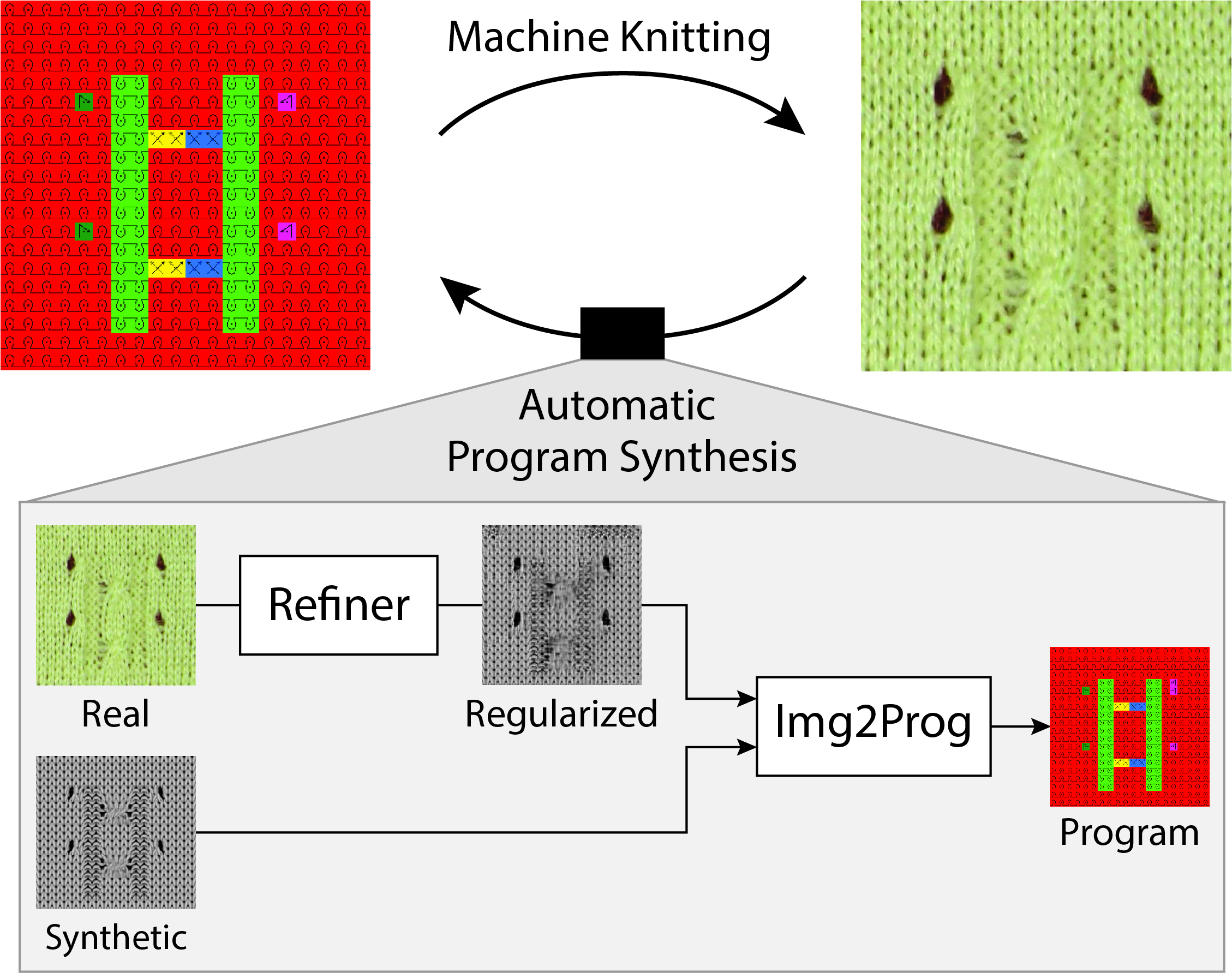

Illustration of our new problem of automatic machine instruction generation from a single image. On the top-left is an example instruction map which gets knitted into a physical artifact shown on its right. We propose a machine learning pipeline to solve the inverse problem by leveraging synthetic renderings of the instruction maps.

Motivated by the recent potential of mass customization brought by whole-garment knitting machines, we introduce the new problem of automatic machine instruction generation using a single image of the desired physical product, which we apply to machine knitting. We propose to tackle this problem by directly learning to synthesize regular machine instructions from real images. We create a curated dataset of real samples with their instruction counterparts and propose to use synthetic images to augment it in a novel way. We theoretically motivate our data mixing framework and show empirical results suggesting that making real images look more synthetic is beneficial in our problem setup. We make our dataset and code publicly available for reproducibility and to motivate further research related to manufacturing and program synthesis.
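As a rough illustration of what mixing real and synthetic samples can look like in practice, the sketch below draws training batches from two pools at a fixed ratio. It is not the paper's framework: the function `mixed_batch`, the `real_fraction` parameter, and the toy sample names are all hypothetical and chosen only for illustration.

```python
import random

def mixed_batch(real, synthetic, batch_size, real_fraction, rng):
    """Draw a batch with roughly `real_fraction` real samples, the rest synthetic.

    Hypothetical helper: each returned item is a (domain, sample) pair so that a
    later step could treat the two domains differently.
    """
    n_real = round(batch_size * real_fraction)
    n_syn = batch_size - n_real
    # Sample with replacement, since the real pool is assumed to be small.
    batch = [("real", s) for s in rng.choices(real, k=n_real)]
    batch += [("synthetic", s) for s in rng.choices(synthetic, k=n_syn)]
    rng.shuffle(batch)
    return batch

# Toy stand-ins for (image, instruction map) pairs.
real = [f"real_{i}" for i in range(5)]
synthetic = [f"syn_{i}" for i in range(50)]

batch = mixed_batch(real, synthetic, batch_size=8, real_fraction=0.25,
                    rng=random.Random(0))
n_real_in_batch = sum(1 for domain, _ in batch if domain == "real")
```

Keeping the domain tag on each sample is one simple way to let a later stage process real images separately, for example to make them look more synthetic before prediction.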

Accepted at ICML 2019.

    @InProceedings{pmlr-v97-kaspar19a,
      title = {Neural Inverse Knitting: From Images to Manufacturing Instructions},
      author = {Kaspar, Alexandre and Oh, Tae-Hyun and Makatura, Liane and Kellnhofer, Petr and Matusik, Wojciech},
      booktitle = {Proceedings of the 36th International Conference on Machine Learning},
      pages = {3272--3281},
      year = {2019},
      editor = {Chaudhuri, Kamalika and Salakhutdinov, Ruslan},
      volume = {97},
      series = {Proceedings of Machine Learning Research},
      address = {Long Beach, California, USA},
      month = {09--15 Jun},
      publisher = {PMLR},
      pdf = {http://proceedings.mlr.press/v97/kaspar19a/kaspar19a.pdf},
      url = {http://proceedings.mlr.press/v97/kaspar19a.html},
    }

Our work was done with acrylic yarn (Tamm Petit 2/30). However, we welcome all yarns, especially natural yarns, which have a lower environmental impact and better properties. Fortunately, the media attention around this project has given us the chance to try other types of yarn.

We thank Steven and Nicole from Meadowgate Alpacas in Beaverdam, VA, for their donation of alpaca yarn.

The more diverse the yarn, the merrier.